SUNDAY, 11 MARCH 2012

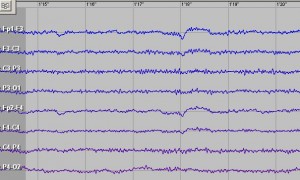

The researchers studied 15 epilepsy patients undergoing brain surgery in an attempt to localize seizures. The surgery involved placing up to 256 electrodes on the surface (cortex) of the temporal lobe, which houses the brain’s auditory centre. The Berkeley neuroscientists recorded brain activity from the electrodes as the patients listened to 5 to 10 minutes of conversation.

The first author of the study, Brian Pasley, then used two computational models to match the electrode activity to spoken sounds, and used these models to predict the words that the subjects had heard. The better of the two models allowed Pasley and his colleagues to correctly guess many of the words from the recordings.

Previous work with neuroprosthetics has proved successful in controlling movement via brain activity, but not in the reconstruction of language. The present analysis, which allows for reproduction of the sounds heard by the patient and some (albeit imperfect) recognition of words, has shed new light on how speech is represented and processed within the human brain. Such work may eventually lead to technology that will enable even the most severely disabled patients to communicate.

Written by Mrinalini Dey

doi:10.1371/journal.pbio.1001251